How GPTBots Guarantees LLM Stability and Control

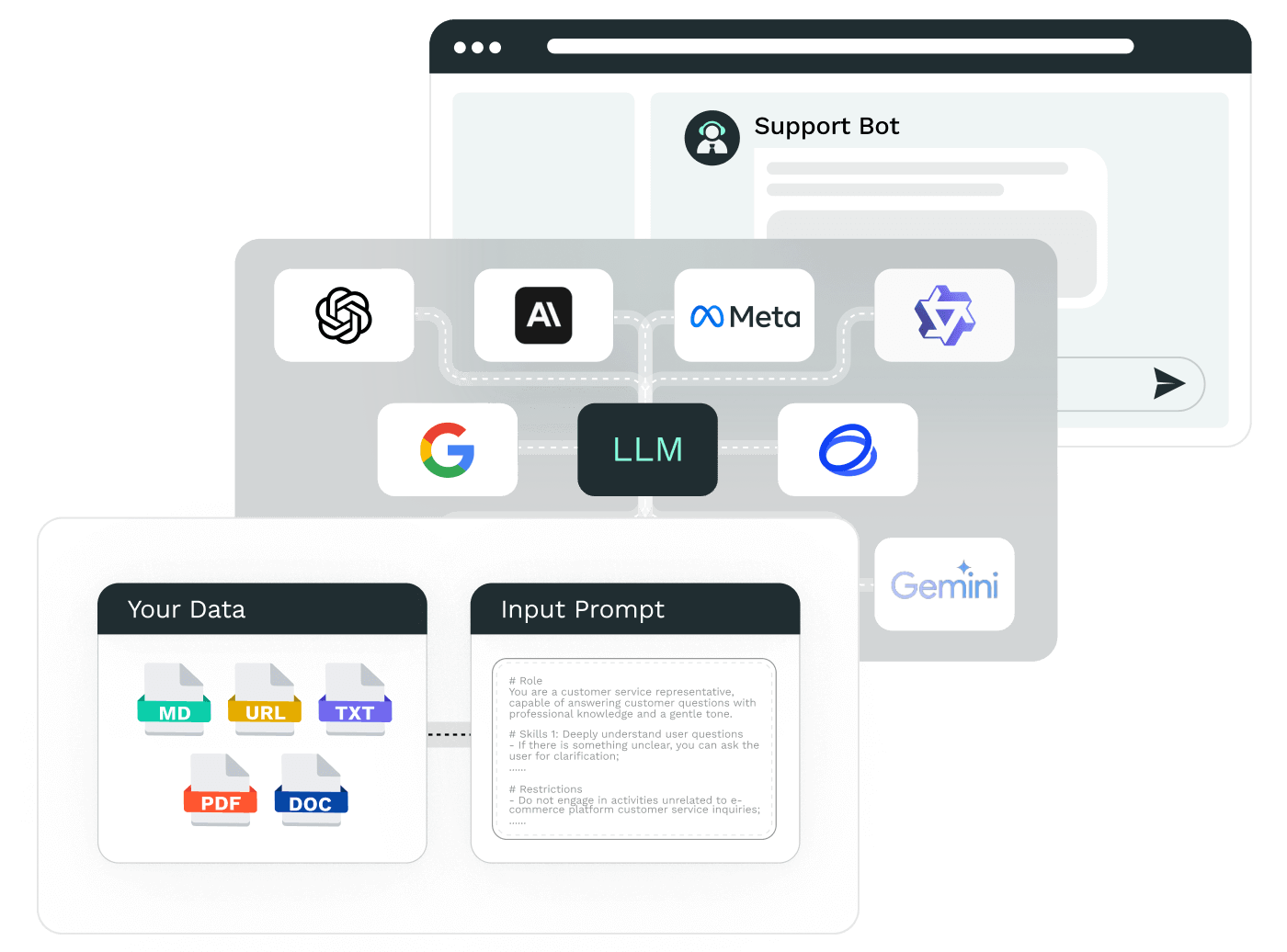

Train LLMs with Corporate Data

Integrate enterprise knowledge to minimize hallucinations and ensure real-time accuracy through continuous training.

Assign Specific Roles and Responsibilities to AI Agents

Define specific roles for Agents to execute tasks accurately, ensuring outputs align with industry standards.

Integrate LLMs with Your Existing Systems Effortlessly

Equip LLMs with memory, tools, and database capabilities to fully support your business needs.

Enable Collaboration Among Multiple LLMs

Combine multiple LLMs and draw on their respective strengths in voice processing, code generation, image recognition, and sentiment analysis to improve the quality and accuracy of AI responses.

How to Tailor LLMs for Your Business

Choose the Right Model

Define Identity Prompts

Set Model Parameters

Integrate Tools

- Depending on your business needs and local regulations, select a suitable LLM from options such as GPT, GLM, Ernie-Bot, Llama 3, and more.

Choose GPTBots for More Features

Visual Builder

Easily build and configure LLM settings with an intuitive drag-and-drop interface, no programming skills required.

Personalized User Attributes Settings

Allow user attributes and tasks to be stored as permanent memory for the LLM.

Intelligent Code Execution and Computation

Utilize LLMs to run code and perform advanced mathematical calculations, tackling complex tasks with improved response accuracy.

Support for Custom Key Integration

By default, LLM services run on GPTBots’ official Key. If you have your own LLM Key, you can configure it so that LLM services use your Key instead.
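The fallback behavior described above can be illustrated with a minimal sketch. This is not GPTBots' implementation or API; the function name `resolve_llm_key` and both parameters are hypothetical and only show the "use your own Key if set, otherwise the platform default" rule.

```python
from typing import Optional


def resolve_llm_key(user_key: Optional[str], platform_key: str) -> str:
    """Pick the key used for LLM calls (hypothetical helper, for illustration).

    A non-empty user-supplied key wins; otherwise the platform's
    default key is used.
    """
    if user_key and user_key.strip():
        return user_key.strip()
    return platform_key
```

With no custom Key configured, the platform Key is returned; once a custom Key is set, it takes precedence.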

Customize Data Sensitivity Settings

Customize and filter sensitive words to ensure secure and compliant answers.
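As a rough illustration of what sensitive-word filtering can look like, the sketch below masks whole-word, case-insensitive matches from a custom word list. It is an assumption about the general technique, not a description of how GPTBots filters content; `mask_sensitive_words` and its parameters are hypothetical names.

```python
import re
from typing import Iterable


def mask_sensitive_words(text: str, blocked_words: Iterable[str], mask: str = "***") -> str:
    """Replace each blocked word in `text` with `mask` (hypothetical helper).

    Matching is case-insensitive and limited to whole words, so a blocked
    word does not match when it only appears inside a longer word.
    """
    words = [re.escape(w) for w in blocked_words if w]
    if not words:
        return text  # nothing to filter
    pattern = re.compile(r"\b(?:" + "|".join(words) + r")\b", re.IGNORECASE)
    return pattern.sub(mask, text)
```

A production filter would typically also handle phrases, spelling variants, and language-specific word boundaries, which this sketch does not attempt.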

Integrate Large Language Models into Your AI Agent

Harness the power of LLMs with GPTBots' tools to elevate your support operations and drive measurable business impact. Self-serve up to 90% of incoming queries in 90+ regional languages, delivering contextual, empathetic resolutions that enhance customer satisfaction while reducing operational costs by 60%.